From the earliest days of cinema, filmmakers have pushed the boundaries of what’s possible on screen. Movie special effects have transformed from simple camera tricks into sophisticated technological marvels that blur the line between reality and imagination. What began with painted backdrops and stop-motion animation has evolved into an industry where artificial intelligence now generates entire worlds.

This journey through visual effects history reveals how innovation and creativity have combined to create the breathtaking spectacles we see in modern cinema, fundamentally changing how stories are told on the silver screen.

The Golden Age of Practical Effects and Matte Paintings

In the early decades of filmmaking, special effects artists relied heavily on practical techniques and optical illusions. Matte paintings became one of the most important tools in a filmmaker’s arsenal, allowing artists to hand-paint elaborate backgrounds on glass panels that would be combined with live-action footage. These painted environments extended sets beyond what budgets would allow, creating sprawling cityscapes, alien planets, and fantastical landscapes.

Films like “The Wizard of Oz” and “Citizen Kane” showcased how traditional special effects techniques could transport audiences to impossible places. Stop-motion animation, refined by legends like Ray Harryhausen, brought creatures to life frame by painstaking frame. His work on films like “Jason and the Argonauts” demonstrated that movie magic didn’t require computers, just patience, skill, and artistic vision.

Miniature models and forced perspective shots also played crucial roles during this era. Directors could create the illusion of massive spaceships, towering buildings, or destructive explosions using carefully crafted scale models and clever camera positioning. These practical filmmaking techniques laid the foundation for everything that would follow.
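
The geometry behind forced perspective is simple enough to sketch in a few lines. The Python snippet below is an illustration only, not a description of any studio's actual workflow: it assumes an idealized pinhole camera, and the function names and the example tower are hypothetical. It shows that a model and a full-size object fill the same portion of the frame whenever their height-to-distance ratios match.

```python
import math


def angular_size_deg(height_m: float, distance_m: float) -> float:
    """Angle (in degrees) that an object of the given height subtends at the camera."""
    return math.degrees(2 * math.atan(height_m / (2 * distance_m)))


def miniature_distance(model_height_m: float,
                       real_height_m: float,
                       real_distance_m: float) -> float:
    """Distance at which a scale model looks as tall as the real object would
    at real_distance_m (idealized pinhole camera, no lens distortion)."""
    return real_distance_m * model_height_m / real_height_m


if __name__ == "__main__":
    # Hypothetical shot: a 2 m model standing in for a 100 m tower seen from 500 m.
    d = miniature_distance(model_height_m=2, real_height_m=100, real_distance_m=500)
    print(f"Place the model {d:.1f} m from the camera")
    print(f"Model angle: {angular_size_deg(2, d):.3f} degrees")
    print(f"Tower angle: {angular_size_deg(100, 500):.3f} degrees")
```

In practice, depth of field, lighting, and camera movement all complicate this picture, which is why the technique depended so heavily on careful camera positioning.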

The Digital Revolution in Visual Effects

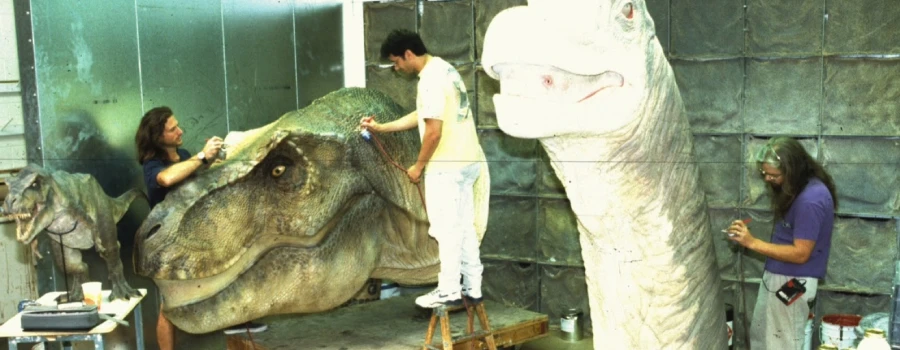

The introduction of computer-generated imagery in the 1970s and 1980s marked a seismic shift in how movie special effects were created. While early digital effects were primitive by today’s standards, films like “Tron” and “The Last Starfighter” demonstrated the potential of this new technology. The real breakthrough came with “Jurassic Park” in 1993, where Industrial Light & Magic combined CGI with practical effects to create photorealistic dinosaurs that still hold up today.

Digital compositing revolutionized the post-production process, making it easier to blend multiple elements into seamless shots. Green screen technology evolved alongside digital tools, allowing actors to perform in empty studios that would later become alien worlds or historical battlefields. Films like “The Matrix” pushed these boundaries even further, introducing bullet-time effects and virtual camera techniques that became instantly iconic.
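
To make the green screen idea concrete, here is a deliberately simplified Python sketch of chroma keying. It is an assumption-laden toy, not how production compositing software works: the "green dominance" rule, the `threshold` parameter, and all function names are invented for illustration. The underlying idea is to derive an alpha value from how green each foreground pixel is, then blend foreground and background by that alpha.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue), each 0-255


def green_matte(pixel: Pixel, threshold: int = 40) -> float:
    """Return alpha for a foreground pixel: 1.0 keeps it, 0.0 replaces it.

    Hypothetical rule: the more green exceeds the other two channels,
    the more transparent the pixel becomes.
    """
    r, g, b = pixel
    dominance = g - max(r, b)
    if dominance <= 0:
        return 1.0
    if dominance >= threshold:
        return 0.0
    return 1.0 - dominance / threshold


def composite(fg: Pixel, bg: Pixel, threshold: int = 40) -> Pixel:
    """Blend one foreground pixel over one background pixel using its matte."""
    alpha = green_matte(fg, threshold)
    return tuple(round(alpha * f + (1 - alpha) * b) for f, b in zip(fg, bg))


def composite_frame(fg_frame: List[List[Pixel]],
                    bg_frame: List[List[Pixel]],
                    threshold: int = 40) -> List[List[Pixel]]:
    """Composite two equally sized frames (lists of rows of pixels)."""
    return [
        [composite(f, b, threshold) for f, b in zip(fg_row, bg_row)]
        for fg_row, bg_row in zip(fg_frame, bg_frame)
    ]


if __name__ == "__main__":
    actor = (200, 150, 120)    # skin-tone pixel: kept as is
    screen = (0, 255, 0)       # pure green pixel: replaced by the background
    alien_sky = (10, 20, 30)
    print(composite_frame([[actor, screen]], [[alien_sky, alien_sky]]))
```

Real keyers also handle green spill on skin and hair, motion blur, and soft edges, which this sketch ignores.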

Motion Capture and Performance Technology

Motion capture technology represented another quantum leap in cinematic visual effects. By recording actors’ movements and expressions with specialized sensors and cameras, filmmakers could translate human performances into digital characters. Andy Serkis’s groundbreaking work as Gollum in “The Lord of the Rings” trilogy proved that motion capture could deliver genuinely emotional performances, not just technical achievements.

This technology has since evolved to include facial capture and full performance capture, enabling directors like James Cameron to create entire alien civilizations in “Avatar.” The line between animation and live-action continued to blur as digital characters became increasingly sophisticated and lifelike. Modern films now feature digital humans that can be difficult to distinguish from their flesh-and-blood counterparts.

The Rise of Real-Time Rendering and Virtual Production

Recent years have witnessed another transformation in how special effects are created on film sets. Virtual production techniques, popularized by “The Mandalorian,” use massive LED walls displaying real-time rendered environments. This approach, often called StageCraft or the Volume, allows actors to perform inside an environment they can actually see rather than imagining it against a green screen.

Game engine technology has migrated from the gaming industry into filmmaking, enabling directors to see near-final effects during filming rather than months later in post-production. This real-time rendering capability has fundamentally changed the creative process, allowing for more experimentation and immediate feedback. Light from the LED screens falls naturally on actors and reflects off costumes and props, creating more realistic interactions between performers and their digital surroundings.

Key advantages of virtual production include:

- Immediate visualization of complex effects during shooting

- Reduced reliance on extensive post-production work

- More natural lighting and reflections on actors

- Greater creative flexibility for directors and cinematographers

- Cost savings on location shooting and travel

Artificial Intelligence and the Future of Movie Effects

Artificial intelligence now represents the cutting edge of special effects technology. Machine learning algorithms can perform tasks that once required armies of digital artists, from removing unwanted objects to de-aging actors. AI-powered tools can generate realistic environments, enhance visual quality, and even create entirely synthetic performances.

Deep learning networks can now generate photorealistic human faces, modify expressions, and seamlessly blend CGI elements into live-action footage. While this raises important ethical questions about authenticity and consent, the technology undeniably offers filmmakers unprecedented creative freedom. AI-assisted rotoscoping, automated color correction, and intelligent upscaling are already standard tools in modern visual effects pipelines.

One of the most striking demonstrations of AI’s disruptive potential came in February 2026, when ByteDance released Seedance 2.0, an image-to-video and text-to-video model hailed by many as the most sophisticated of its kind to date.

What makes Seedance 2.0 architecturally distinct is its multimodal constraint-based design. Rather than simply generating footage from a text prompt, it accepts up to twelve simultaneous input files, including images, video clips, audio files, and text, and uses all of them together to produce output that reflects every input as a constraint on the result. Users can reference motion, camera movements, characters, scenes, and sounds from any uploaded content, while the model maintains consistent faces, clothing, and visual styles across an entire video.

The model’s release sparked immediate controversy in Hollywood. Realistic clips based on real actors, TV shows, and films soon went viral across the internet. SAG-AFTRA condemned what it called “blatant” copyright infringement enabled by the tool, while Disney sent a cease-and-desist letter accusing ByteDance of a “virtual smash-and-grab” of its intellectual property.

Despite the controversy, Seedance 2.0 also marked a notable milestone in large-scale production. China’s 2026 Spring Festival Gala became the world’s first publicly released project to use the model extensively, with AI-generated visual content appearing in several of its segments. ByteDance had planned a global rollout for mid-March 2026, but paused those plans as its engineers and lawyers worked to address intellectual property concerns raised by Hollywood studios.

Neural rendering techniques use AI to create highly detailed textures and lighting effects that would be prohibitively expensive using traditional methods. These tools democratize high-end visual effects, making sophisticated techniques accessible to smaller productions and independent filmmakers. The technology continues to evolve rapidly, with new applications emerging constantly.

Where Cinema Magic Goes From Here

The evolution of movie special effects reflects our endless desire to see the impossible made real. From hand-painted glass plates to algorithms that generate entire performances, each technological leap has expanded the vocabulary of cinematic storytelling. Today’s filmmakers have access to tools that would seem like science fiction to the pioneers who first experimented with stop-motion and matte paintings, yet the fundamental goal remains unchanged: transporting audiences to worlds beyond their everyday experience.

As we look toward the future, the integration of AI, virtual production, and yet-unimagined technologies promises even more spectacular possibilities. The next generation of special effects artists will blend traditional craftsmanship with cutting-edge technology, creating visual experiences we can barely imagine today. What new worlds will they show us? Only time will tell, but if history teaches us anything, it’s that the only limit is human imagination.